I spend quite some fraction of last week on something which is not really related my project: trying to make cross-validation possible for multi-class MKL (multiple kernel learning) machines using my framework from last year’s GSoC. To this end, I added subset support to SHOGUN’s combined features class; and then went for a bunch of bugs that prevented it from working. But it now does! So cross-validation should now be possible within a lot more situations. Thanks to Eric who reported all the problems.

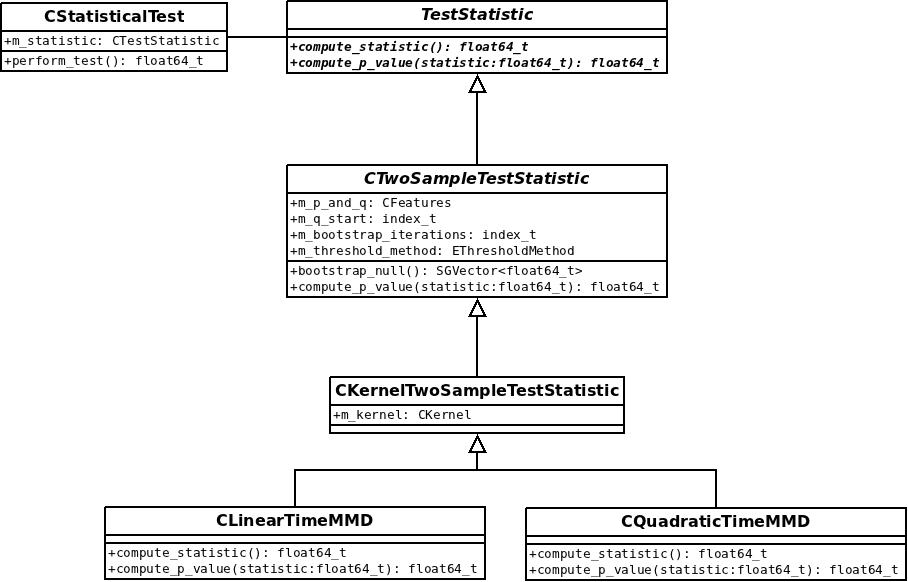

Apart from that, I worked on documentation for the new statistical testing framework. I added doxygen class descriptions, see for example CQuadraticTimeMMD. More important, I started writing a section for the SHOGUN tutorial, a book-like description of all algorithms. We hope that it will grow in the future. You can find the \(\LaTeX\) sources at github. We should/will add a live pdf download soon.

Another minor thing I implemented is a data generator class. I think it is nice to illustrate new algorithms with data that is not fixed (aka load a file). The nice thing about this is that it is available for examples from all interfaces — so far I implemented this separately for c++ and python; this is more elegant now. I bet some of the others projects will need similar methods for their demos too; so please extend the class!

This week, I will add more data generation methods to the generator, in particular data that can be used to illustrate the recently implemented HSIC test for independence. Reference datasets are quite complicated, so this might take a while. Another thing we recently changed is a new framework for unit-tests, so I will write these for all new methods I created recently.